We spend hours every day mindlessly scrolling through social media on our phones. Researchers and analysts have long warned about the mental toll of doomscrolling, but it takes a physical toll as well. That physical side used to be difficult to measure; thanks to an AI model, it can now be quantified.

AI Discovers Adverse Effects of Doomscrolling on Physical Health

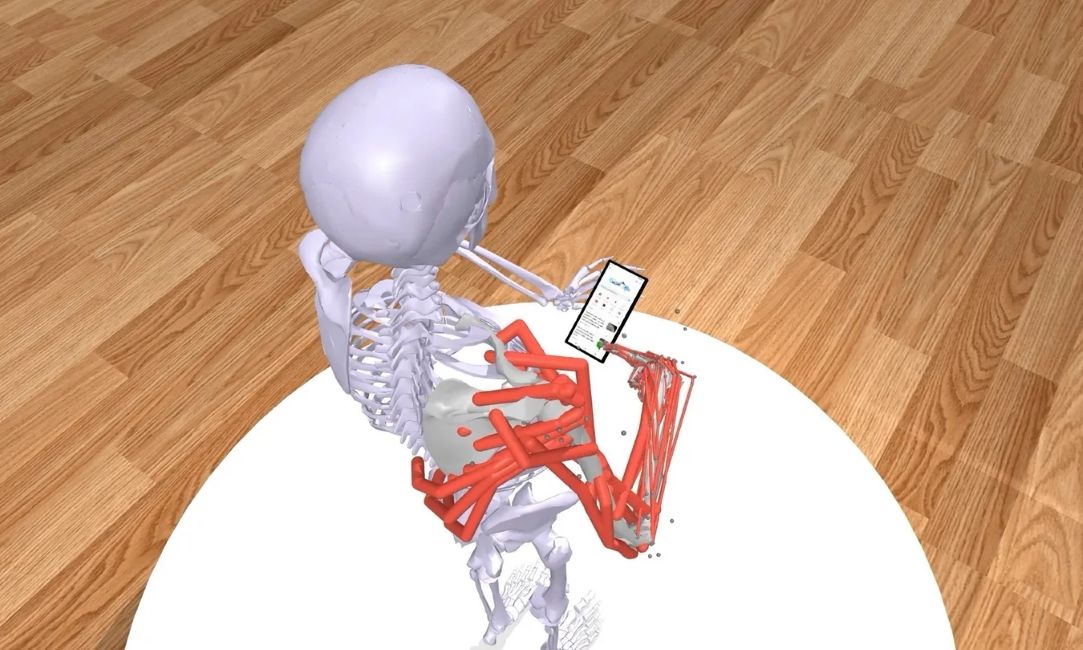

Developed by researchers at Aalto University in Finland and Leipzig University in Germany, Log2Motion is an AI-driven simulation tool that transforms static smartphone touch logs into dynamic biomechanical data. Instead of looking only at the coordinates on the screen, Log2Motion uses a musculoskeletal model driven by reinforcement learning to reproduce the hand movements behind each touch.

Until now, the obstacle to quantifying the muscular side of screen interaction was that traditional models could easily tell where a user tapped but offered no insight into how physically demanding the interaction was. Log2Motion changes that.

By combining a physics simulator with a software emulator, the system generates a virtual human model with bones and muscles that interacts with real mobile apps in real time. As the simulated hand replays logged user interactions, the AI calculates the speed, accuracy and biomechanical effort required for each one.
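To give a rough sense of the raw material such a tool starts from, here is a minimal Python sketch that derives duration, path length and average speed from one logged swipe. It is not Log2Motion's code: the log format (`TouchSample` with a timestamp and pixel coordinates) and the function `gesture_metrics` are hypothetical, and the sketch covers only screen-level kinematics. Estimating muscular effort requires the musculoskeletal simulation described above, which this does not attempt to reproduce.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class TouchSample:
    """One logged touch point (hypothetical log format)."""
    t: float  # timestamp in seconds
    x: float  # horizontal screen position in pixels
    y: float  # vertical screen position in pixels


def gesture_metrics(samples):
    """Return duration, path length and mean speed for one gesture."""
    if len(samples) < 2:
        # A single sample (a tap) has no measurable path or speed.
        return {"duration_s": 0.0, "path_px": 0.0, "mean_speed_px_s": 0.0}

    # Sum the straight-line distance between consecutive samples.
    path = sum(
        hypot(b.x - a.x, b.y - a.y)
        for a, b in zip(samples, samples[1:])
    )
    duration = samples[-1].t - samples[0].t
    speed = path / duration if duration > 0 else 0.0
    return {"duration_s": duration, "path_px": path, "mean_speed_px_s": speed}


# A made-up upward swipe: 600 pixels covered in 0.1 seconds.
swipe = [
    TouchSample(0.00, 540, 1600),
    TouchSample(0.05, 540, 1300),
    TouchSample(0.10, 540, 1000),
]
metrics = gesture_metrics(swipe)
print(metrics)
```

Numbers like these describe only what happens on the glass. The point of the research is to go one step further and estimate what the hand has to do to produce them.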

The Results are Scary, but There's Hope for the Future

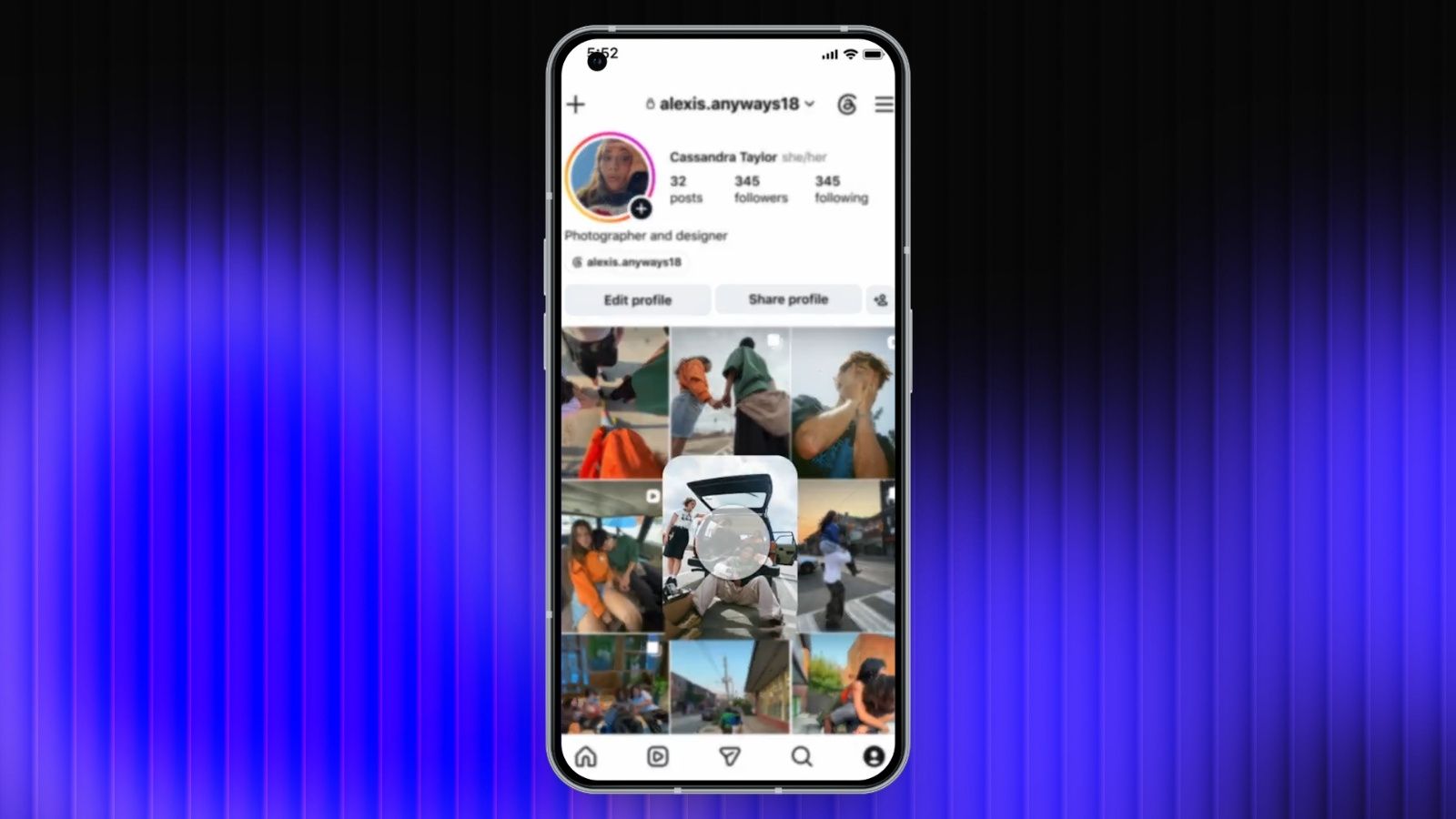

After analyzing the data generated by Log2Motion, the researchers concluded that swiping up and down, the core gesture on platforms like TikTok and Instagram, requires far more muscular effort than other movements.

The AI also highlighted that users with large smartphones strain their hands considerably when they try to reach the far corners of the screen with one hand. As for single taps, the effort each one requires is negligible, but because taps are so common, they add up and contribute to physical fatigue.
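The "adding up" is simple arithmetic, which the short Python sketch below makes concrete. Every number in it is invented purely for illustration and none comes from the Log2Motion study: the effort values are in arbitrary units, and the daily gesture counts are hypothetical.

```python
def cumulative_effort(effort_per_gesture, daily_counts):
    """Multiply per-gesture effort by how often each gesture occurs."""
    return {
        name: effort_per_gesture[name] * count
        for name, count in daily_counts.items()
    }


# Illustrative values only (arbitrary units, not measured data).
effort_per_gesture = {"tap": 1, "swipe": 5}
daily_counts = {"tap": 2000, "swipe": 300}

totals = cumulative_effort(effort_per_gesture, daily_counts)
print(totals)
```

With these made-up figures, a swipe costs five times as much as a tap, yet taps still account for the larger daily total simply because there are so many of them.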

As bad as this may sound, there is a silver lining. Now that physical exertion can finally be measured and quantified, UX and UI developers can use the data to improve their app layouts and make them easier on our hands. Log2Motion can help them design their interfaces around comfort early in the development pipeline.

The researchers also note that the model can be adjusted to simulate interactions for users with specific motor impairments, such as hand tremors or reduced grip strength, as well as for users of prosthetics.

More information about Log2Motion will be presented at the CHI 2026 conference in April 2026. The model's capabilities will also be expanded, with the next iterations expected to simulate postures. The Log2Motion paper has already been published on arXiv.