When you open Google's AI Edge Gallery app on a Pixel phone and try to run Gemma 4, the app only lets you run the on-device AI model via the CPU. There is no GPU or TPU option on a phone that is branded as an AI phone by Google. Basically, Google's own AI model can't run on Google's own Tensor accelerator via Google's own app. What do you make of it?

This led us to benchmark on-device AI performance across four flagship chipsets: Apple's A19 Pro, Qualcomm's Snapdragon 8 Elite Gen 5, MediaTek's Dimensity 9500 and Google's Tensor G5. The results explain why Android as a platform is losing the on-device AI race despite higher TOPS numbers on paper. And interestingly, it has more to do with software than hardware.

How We Benchmarked On-Device AI on Flagship Phones

To benchmark on-device AI performance, we ran Google's AI Edge Gallery app on four of the latest phones: the iPhone Air (A19 Pro), the Samsung Galaxy S26 Ultra (Snapdragon 8 Elite Gen 5), the Vivo X300 Pro (Dimensity 9500) and the Pixel 10 Pro Fold (Tensor G5).

After that, we downloaded Google's Gemma 4 E2B AI model which comes with a built-in benchmark tool. It measures time to first token (TTFT, how long you wait before seeing a response) and decode speed (tokens/second, how fast it generates the output), among other things.

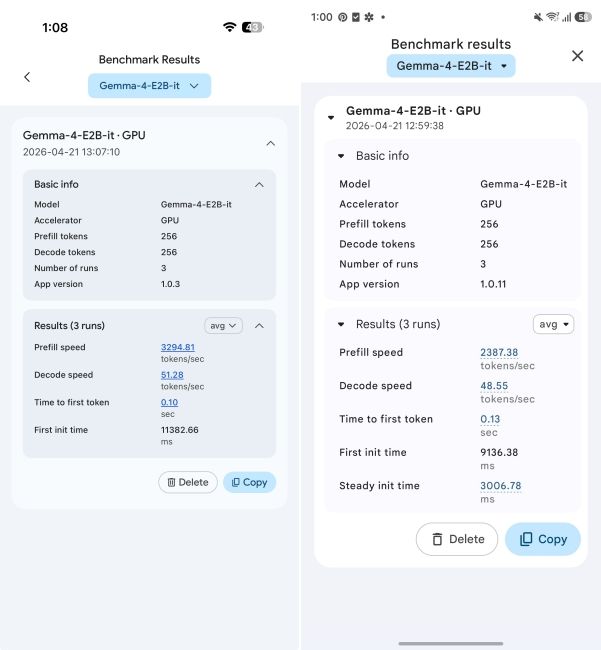

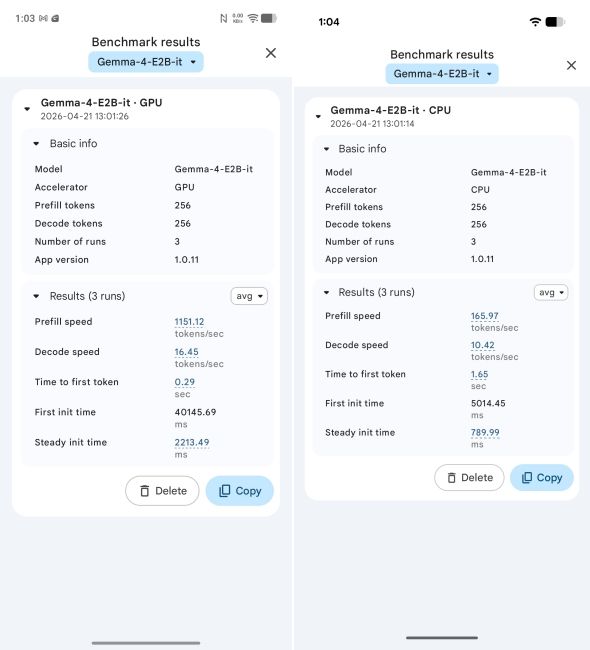

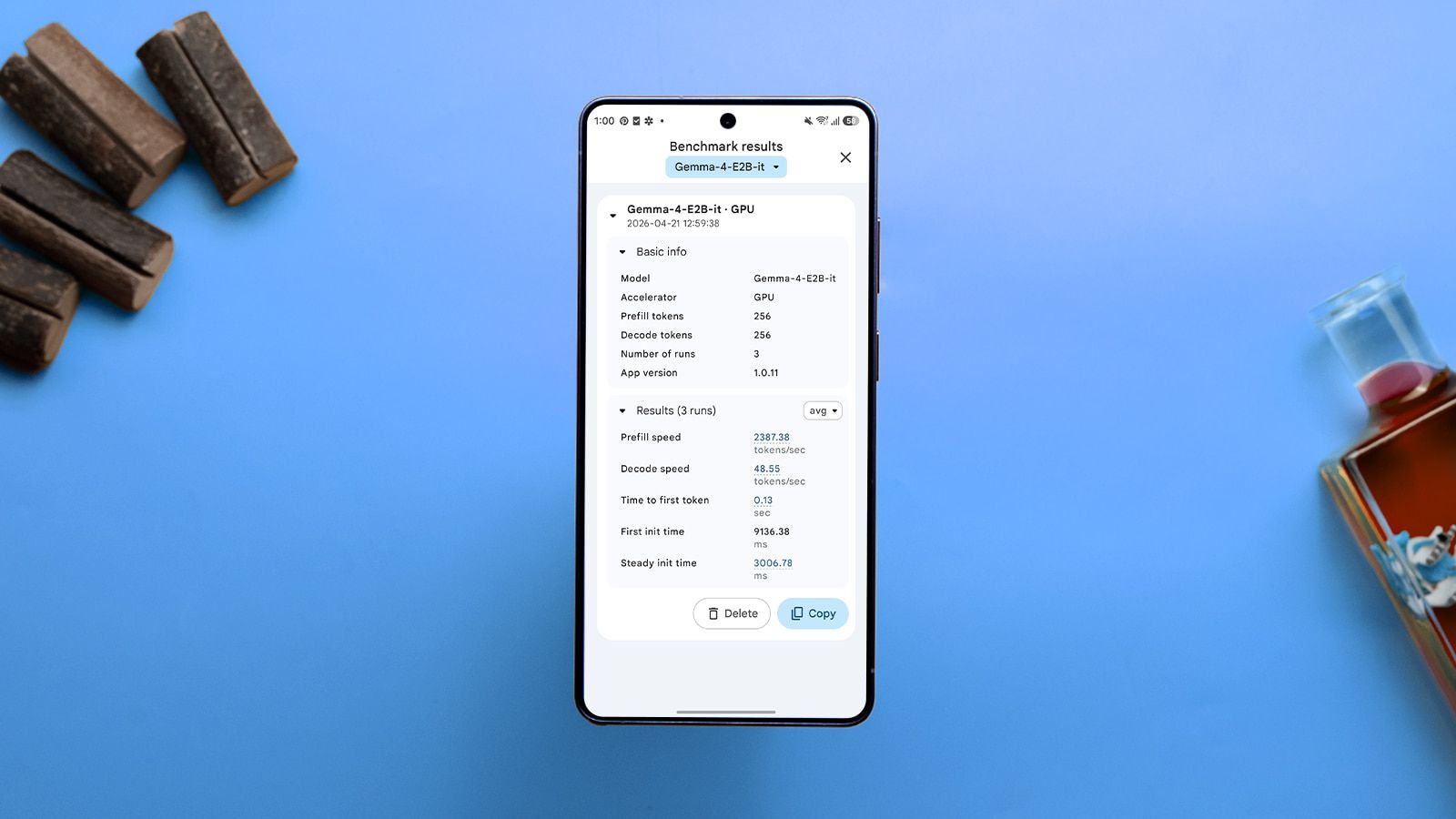

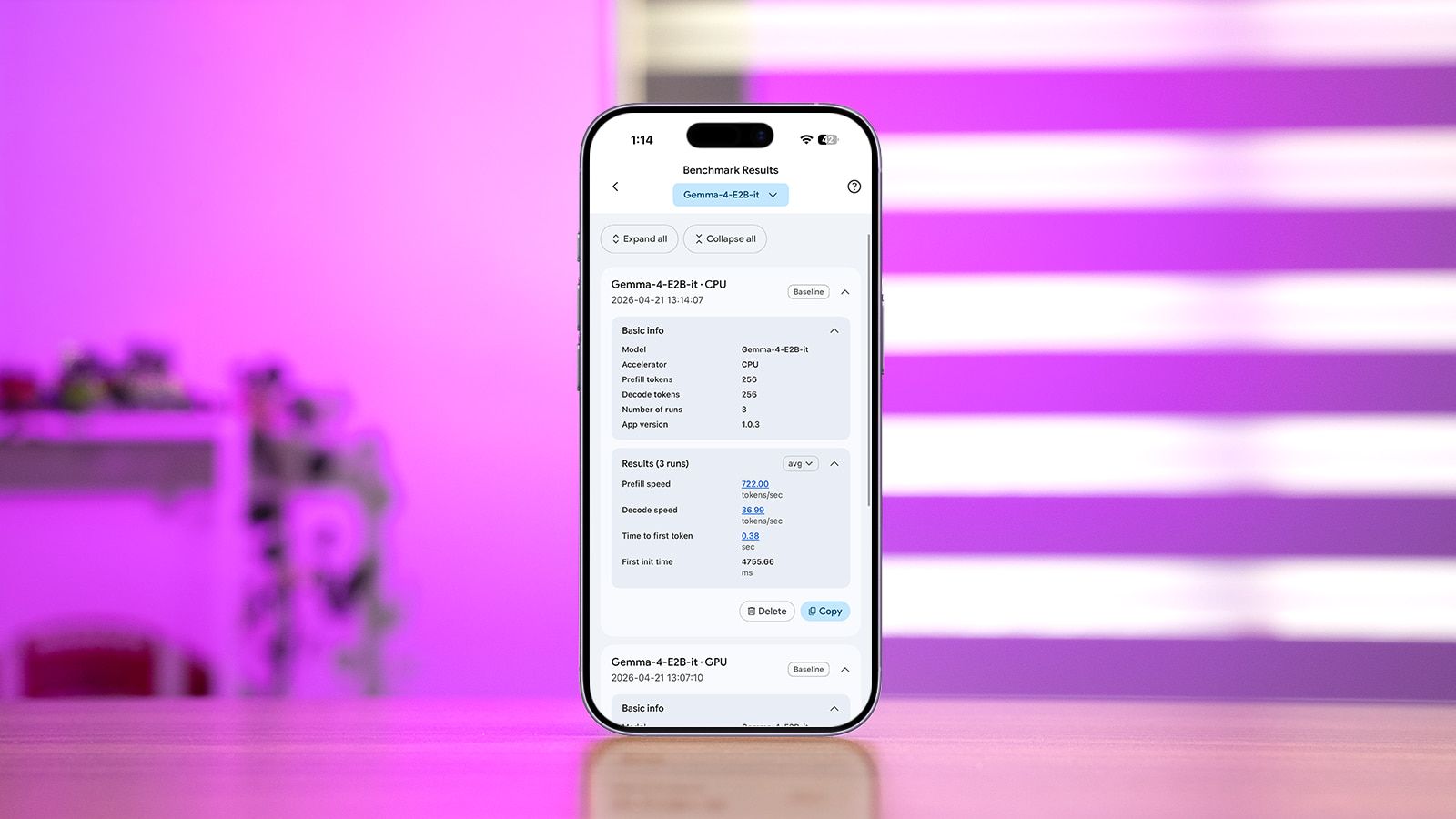

For every chip, we ran the benchmark with 256 prefill tokens and 256 decode tokens across three runs. On the iPhone Air, we tested both the CPU and GPU backends while on Android, we picked the GPU backend, except on the Pixel 10 Pro Fold which only offers the CPU option.
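To make the two metrics concrete, here is a minimal Python sketch of how TTFT and decode speed can be derived from token timestamps and then averaged over several runs. The function names and the exact formula are our own illustration, not the Edge Gallery benchmark tool's actual implementation, which may count tokens or time slightly differently.

```python
from statistics import mean


def summarize_run(request_start, token_times):
    """Return (ttft_seconds, decode_tokens_per_second) for one run.

    request_start: timestamp (seconds) when the prompt was submitted.
    token_times: timestamps (seconds) at which each output token arrived.
    Hypothetical helper for illustration only.
    """
    if len(token_times) < 2:
        raise ValueError("need at least two tokens to measure decode speed")
    # TTFT: how long the user waits before the first token appears.
    ttft = token_times[0] - request_start
    # Decode speed: tokens generated after the first one, divided by
    # the time spent generating them.
    decode_time = token_times[-1] - token_times[0]
    decode_tps = (len(token_times) - 1) / decode_time
    return ttft, decode_tps


def average_runs(runs):
    """Average TTFT and decode speed over a list of (ttft, tps) results."""
    return mean(r[0] for r in runs), mean(r[1] for r in runs)


# Synthetic example: first token after 0.10 s, then one token every 0.02 s.
timestamps = [0.10 + 0.02 * i for i in range(256)]
ttft, tps = summarize_run(0.0, timestamps)
print(f"TTFT: {ttft:.2f} s, decode: {tps:.1f} tokens/s")
```

With 256 decode tokens per run, a single slow token barely moves the decode figure, while TTFT is dominated by how quickly the 256-token prefill is processed.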

The Edge Gallery app runs on Google's LiteRT-LM engine under the hood on both Android and iPhone. And for better parity, we kept the same Gemma 4 E2B model and the same quantisation settings. This makes the results directly comparable.

On-Device AI Test Results: The iPhone Wins

First off, let's go through the full benchmark data across all configurations that we tested.

| Chipset | Backend used | TTFT (seconds, lower is better) | Decode (tokens per second, higher is better) |
|---|---|---|---|
| Apple A19 Pro | GPU | 0.10 | 51.28 |
| Apple A19 Pro | CPU | 0.38 | 36.99 |
| Snapdragon 8 Elite Gen 5 | GPU | 0.13 | 48.55 |
| Dimensity 9500 | GPU | 0.29 | 16.45 |
| Tensor G5 | CPU | 1.65 | 10.42 |

As you can see, the iPhone Air's GPU backend posts the best result on both metrics, and against the Dimensity 9500 and Tensor G5 it's not even close. And we are talking about the 5-core GPU on the iPhone Air. The top-tier iPhone 17 Pro comes with a 6-core GPU, which would likely perform even better.

Now, coming to the benchmark results, the Apple A19 Pro GPU generates 51.28 tokens per second, which is around 5.6% faster than the Snapdragon 8 Elite Gen 5 chipset's 48.55 tokens per second. The decode and TTFT gaps are both small, and Qualcomm deserves credit here.

However, when you compare Apple's CPU inference results against the Dimensity 9500 and Tensor G5, the difference is stark. On CPU alone, the A19 Pro generates 36.99 tokens per second, which is 2.25x faster than the Dimensity 9500's GPU decode speed of 16.45 tokens per second.

MediaTek markets the Dimensity 9500 chipset as the industry's first 100 TOPS smartphone chip. However, its GPU-powered inference speed can't even match an iPhone's fallback option.

Google's Tensor G5 processor, which powers the Pixel 10 Pro Fold and is billed as "custom-built for Google's advanced AI", can't even use its GPU or TPU. We ran the test on the CPU and it performed the worst by a wide margin. Its decode speed of 10.42 tokens per second is around 5x slower than the A19 Pro's GPU result.

And time to first token (TTFT) on the Tensor G5 is 1.65 seconds, compared to the iPhone's 0.10 seconds. That is a huge 16.5x gap before the user sees any response on the screen.
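For readers who want to check the arithmetic, the ratios quoted above can be reproduced from the table with a few lines of Python. The numbers are copied from our results; the dictionary layout and helper names are just for illustration.

```python
# Results copied from the table above: (TTFT in seconds, decode in tokens/s).
results = {
    "A19 Pro GPU": (0.10, 51.28),
    "A19 Pro CPU": (0.38, 36.99),
    "Snapdragon 8 Elite Gen 5 GPU": (0.13, 48.55),
    "Dimensity 9500 GPU": (0.29, 16.45),
    "Tensor G5 CPU": (1.65, 10.42),
}


def decode_ratio(faster, slower):
    """How many times faster `faster` decodes compared with `slower`."""
    return results[faster][1] / results[slower][1]


def ttft_ratio(slower, faster):
    """How many times longer `slower` takes to produce the first token."""
    return results[slower][0] / results[faster][0]


gpu_lead = (decode_ratio("A19 Pro GPU", "Snapdragon 8 Elite Gen 5 GPU") - 1) * 100
print(f"A19 Pro GPU vs Snapdragon GPU: {gpu_lead:.1f}% faster")  # 5.6% faster
print(f"A19 Pro CPU vs Dimensity GPU: {decode_ratio('A19 Pro CPU', 'Dimensity 9500 GPU'):.2f}x")  # 2.25x
print(f"A19 Pro GPU vs Tensor G5 CPU: {decode_ratio('A19 Pro GPU', 'Tensor G5 CPU'):.2f}x")  # 4.92x
print(f"Tensor G5 vs A19 Pro GPU TTFT: {ttft_ratio('Tensor G5 CPU', 'A19 Pro GPU'):.1f}x")  # 16.5x
```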

Why Android Flagships Struggle

Before we discuss the reasons, I want to point out that not a single Android chip in our test used the NPU or TPU. The Snapdragon 8 Elite Gen 5 has the Hexagon NPU, the Dimensity 9500 comes with the NPU 990 that claims to hit 100 TOPS (trillion operations per second) and the Tensor G5 has a custom-built TPU (Tensor Processing Unit) that Google markets endlessly.

But none of these hardware capabilities was available to use inside the Edge Gallery app. And that tells you that the problem lies with the software more than hardware.

With Android 15 in 2024, Google deprecated NNAPI (Neural Networks API) that was supposed to be Android's unified layer for on-device AI inference and NPU access. In its own migration guide, Google wrote "we expect the majority of devices in the future to use the CPU backend" for NNAPI workloads and recommended migrating to alternatives like LiteRT (formerly TensorFlow Lite).

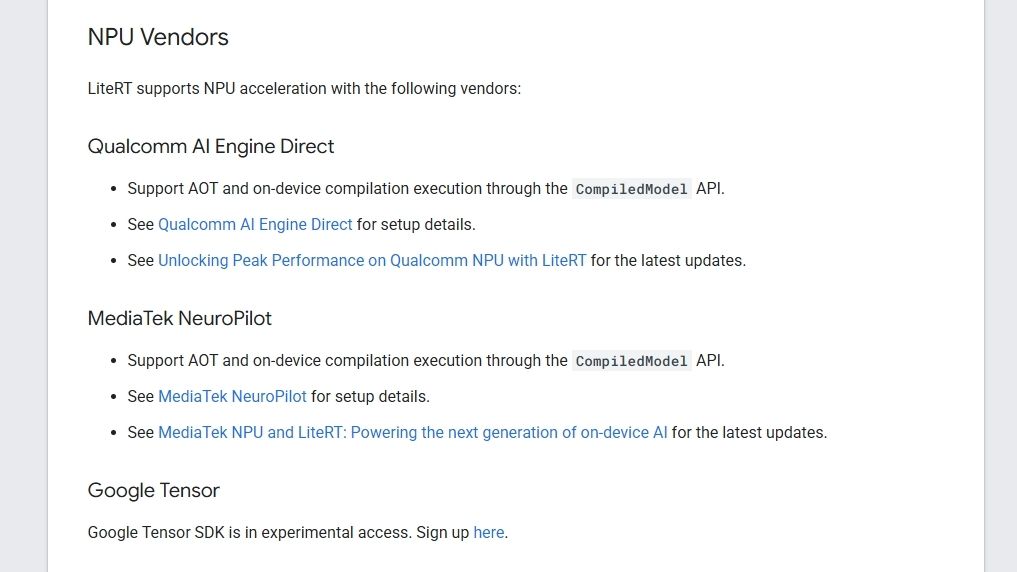

LiteRT was the replacement stack and its LiteRT-LM engine powers the Edge Gallery app. On phones, it runs on CPU or GPU. NPU support also exists, but it is patchy, vendor-locked and unavailable in consumer apps.

Due to the lack of a common layer, chipmakers started developing their own proprietary stacks. Qualcomm has its own AI Engine Direct (QNN) stack for Hexagon NPU access, MediaTek has NeuroPilot for the NPU 990 and Samsung has ENN for Exynos chips. Each vendor ships its own SDK and toolchain, and developers have to choose which SDK to target for specific chips.

As a result, most apps just skip the NPU entirely and fall back to CPU or GPU, which is what LiteRT-LM does in Edge Gallery. Recently, Google did partner with Qualcomm and MediaTek to integrate their SDKs into LiteRT, but we have not seen the improvements so far. The gap between what a chipset can do and what consumer apps offer is pretty large.
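The fragmentation described above can be sketched in a few lines of Python. The vendor-to-SDK mapping comes from this article; the function, its parameters and the returned strings are hypothetical and do not correspond to LiteRT's or any vendor's real API. The point is only to show why an app that has not bundled a given vendor's SDK ends up on the GPU or CPU.

```python
# Vendor-to-SDK mapping as described in the article. There is no entry for
# Google Tensor because no public TPU path is available to consumer apps.
VENDOR_NPU_SDK = {
    "qualcomm": "QNN",
    "mediatek": "NeuroPilot",
    "samsung": "ENN",
}


def choose_backend(vendor, bundled_sdks, gpu_available):
    """Pick an inference backend for a device (hypothetical sketch).

    vendor: chipset vendor name, e.g. "Qualcomm".
    bundled_sdks: set of vendor SDK names the app actually integrated.
    gpu_available: whether a GPU backend is offered on this device.
    """
    sdk = VENDOR_NPU_SDK.get(vendor.lower())
    # The NPU is reachable only if the app integrated that vendor's SDK.
    if sdk is not None and sdk in bundled_sdks:
        return f"NPU via {sdk}"
    # Otherwise take the path of least resistance.
    return "GPU" if gpu_available else "CPU"


# An app that integrated no vendor SDK never touches the NPU.
print(choose_backend("Qualcomm", set(), gpu_available=True))   # GPU
print(choose_backend("MediaTek", set(), gpu_available=True))   # GPU
print(choose_backend("Google", set(), gpu_available=False))    # CPU
```

Covering every chipset means integrating and testing one SDK per vendor, which is exactly the work most consumer apps skip.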

For example, even professional benchmark apps can't cut through this mess. Primate Labs, the developer of Geekbench AI, has direct access to chipmakers and co-operates with them on many fronts. But again, the situation is quite fragmented. Geekbench AI uses Qualcomm's QNN on Snapdragon and Samsung's ENN on Exynos. However, there is no backend at all for the Tensor chip, so Pixel phones default to CPU or GPU.

In my own Geekbench AI testing on smartphones, I have seen wildly inconsistent results and that's why I don't consider its AI scores. To sum up, if Primate Labs can't produce consistent Android AI scores with direct vendor access, a consumer app developer has absolutely no chance.

Google Tensor Can't Run Google's Own AI

Google designed its in-house Tensor chip, developed the LiteRT stack, trained the Gemma AI model and released the Edge Gallery app on Android, yet it finished last. The reason is that Google has not shipped a public TPU plugin for LiteRT that Edge Gallery can use. On the Pixel 10 series, even the GPU plugin is unavailable.

Google's own LiteRT NPU documentation lists Qualcomm and MediaTek for NPU compatibility, but for its own Tensor chip, it writes "Google Tensor SDK is in experimental access". Developers need to sign up for access. In fact, there is a GitHub issue on google-ai-edge/LiteRT from October 2025 asking how to run inference on the Pixel's TPU and the widely-accepted answer is to use the CPU or GPU.

This is Google's 5th-generation Tensor chip and the company has marketed the line as custom-built chips for AI-first Pixel phones, but the public SDK story for the TPU is still in an experimental phase. Meanwhile, Apple handed API access for its Neural Engine to third-party iPhone developers way back in 2017. It suggests that Google isn't serious about developing its own software stack for on-device AI on Android.

Apple's Edge is Tight Hardware-Software Integration

Unlike Google, which is wasting the opportunity despite owning the whole stack, Apple believes in deeply integrating the hardware with software. Apple has been building a unified software stack for nearly a decade. Core ML, Metal, coremltools and most recently, MLX have been developed to deliver consistent and seamless AI performance locally on the device.

Core ML is the on-device ML runtime and it can automatically choose between the CPU, GPU and Neural Engine based on what the device can handle. Metal, on the other hand, handles GPU compute with a mature driver stack and MLX is Apple's new framework for on-device AI on Apple Silicon.

Basically, the runtime targets the Neural Engine if the model is ANE-compatible, the GPU if it's a heavy AI workload, and the CPU as the fallback option. It just works on the iPhone. On Android, developers have to pick between multiple software stacks depending on the chipset, so they simply choose the path of least resistance and mostly ship CPU-only inference.

On the hardware side too, Apple added Neural Accelerators directly into each A19 Pro GPU core for faster AI performance. In addition, Apple added SME units into the CPU, starting with the A18 Pro, to improve CPU-driven AI workloads. This kind of vertical integration is only done by Apple where both hardware and software are deeply integrated to take advantage of the actual hardware capability.

That's why we have several third-party apps on iPhone which target the Apple Neural Engine (ANE), GPU via MLX, Metal and Core ML.

The Hardware Works But the Software Doesn't

In my assessment, the hardware on Android phones we tested is actually capable, but the software path is broken. Qualcomm already demonstrated that its chip delivers competitive on-device AI performance. Google also uses its on-device Gemini Nano model on Android phones, but the benefit is not extended to third-party developers.

The larger point is that chipset manufacturers need to build the software stack first before doing marketing theatre and touting large TOPS numbers. It's meaningless if the OS or apps can't utilize the actual hardware capability. Apple doesn't even publish a TOPS figure, but it has quietly built the entire software stack for on-device AI.

And on-device AI performance is not just something for benchmarks; it's consequential for the near future. As small AI models are getting better, as we saw with Google's Gemma 4, users would love to chat and get things done locally on their device without having to subscribe to AI chatbots. On top of that, it's completely private and your chats are not sent to the cloud.

As I argued in my piece on whether AI on your phone is really free, the free AI features are a dependency trap to lock users into cloud ecosystems that can be monetised later via subscriptions. The only solution to avoid this trap is running open-source AI models locally on your phone. But as our benchmarks showed, Google is just not serious in creating a unified software stack for on-device AI on Android.

Sure, LiteRT is an important step, but Google has to streamline the software stack so that developers are not left in the lurch and consumers can finally enjoy the hardware capability. Google has to build an ecosystem in which, no matter which chipset is powering your Android phone, you can always leverage the available compute.
